Bradley M. Kühn

bkuhn@copyleft.org👀 … https://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/ …my colleague Denver Gingerich writes: newcomers' extensive reliance on LLM-backed generative AI is comparable to the Eternal September onslaught to USENET in 1993. I was on USENET extensively then; I confirm the disruption was indeed similar. I urge you to read his essay, think about it, & join Denver, me, & others at the following datetimes…

$ date -d '2026-04-21 15:00 UTC'

$ date -d '2026-04-28 23:00 UTC'

…in https://bbb-new.sfconservancy.org/rooms/welcome-llm-gen-ai-users-to-foss/join

#AI #LLM #OpenSource

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy I have to admit that I am pretty surprised by this post. Not in terms of being welcoming to newcomers, which is something I have advocated for and made the center of all of my FOSS work.

However, the post says the following:

> I encourage all of us in the FOSS community to welcome the new software developers who've adopted these tools, investigate their motivations, and seriously consider cautiously and carefully incorporating their workflows with ours.

While the sentence which follows acknowledges that "seasoned software developers understand the benefits and limitations of LLM-assisted coding tools", there are two big things I expected at least acknowledged:

- Many maintainers are facing *burnout* over the situation. However, I agree that addressing this in terms of norms is something we can consider

- The biggest thing I am surprised to not see addressed at all is the licensing and copyright implications

(cotd)

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy The surprising thing about saying "seriously consider cautiously and carefully incorporating their workflows with ours" is that it doesn't address at all my *biggest* fear: the copyright status of LLM generated contributions seems currently unsettled.

I know there's been assertions to the contrary floating around: the Supreme Court deferred to a lower court in the US. However that is not the same thing as the Supreme Court making a specific decision. And internationally, the copyright situation of output is even murkier... it will take a long time for this to settle.

Does Conservancy not think this is the case? I would be surprised if so, but perhaps you all have an interpretation that I am not currently aware of.

If there *is* concern, then we hit a serious risk: we may be seeing many contributions with legal status which has *yet to be determined* entering seasoned codebases. And this worries me a lot.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy There are other things I am less worried about. Using genAI tools to probe for software vulnerabilities does not lead to contributions of unknown status. Same for using LLMs to explore a codebase. However, there isn't any distinction made in the post, only a "seriously consider cautiously and carefully incorporating their workflows with ours".

Does this mean Conservancy currently believes that the copyright status of genAI output from contemporary LLM tools is a settled matter, in terms of either a) being fully in the public domain or b) being copyrighted by the "prompter"?

Bradley M. Kühn

bkuhn@copyleft.org(1/n) [ Meta-info to start the thread. Here and the posts that follow reply to lots of people's comments (from various threads) together here. Can we consolidate this conversation into this single thread to discuss https://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/ ? ]

Cc: @wwahammy @silverwizard @mjw @cwebber @josh @jamey @mason @spencer @rootwyrm @drwho @mmu_man @mathieui @beeoproblem

Bradley M. Kühn

bkuhn@copyleft.org(3/n) …

Proprietary #LLM-backed gen #AI systems' *users* aren't criminals! They're just users of proprietary systems & some of them want to engage positively with FOSS.

Years ago, I supported Homebrew's membership at #SFC despite their *primary* goal of improving #Apple products with #FOSS. It made me a bit 🤢, but — historically — forming alliances with proprietary software enthusiasts who mean well & are #FOSS-curious is why our community is resilient.

Neal Gompa (ニール・ゴンパ)

neal@social.gompa.me

@bkuhn @wwahammy @silverwizard @cwebber It's also how we *got* a Free Software community in the first place. I know it's been a long time, but Free Software sprouted from proprietary systems. Yes, we'd like the Overton window to move more in our favor, but shunning people isn't the way to do it.

Bradley M. Kühn

bkuhn@copyleft.org(2/n) … In https://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/ ,

Denver's key points are: we *have* to (a) be open to *listening* to people who want to contribute #FOSS with #LLM-backed generative #AI systems, & (b) work collaboratively on a *plan* of how we can solve the current crisis.

Nothing ever got done politically that was good when both sides become more entrenched, refuse to even concede the other side has some valid points, & each say the other is the Enemy. …

Bradley M. Kühn

bkuhn@copyleft.org@cwebber I think maybe you missed https://sfconservancy.org/blog/2026/mar/04/scotus-deny-cert-dc-circuit-thaler-appeal-llm-ai/ where #SFC analyzed that situation?

Also, follow @ai_cases & see the *firehose* of litigation on this & remember the “Work Based on the Program” issue under GPLv2 has still never been litigated directly but lots of cases about 100% proprietary software have bolstered GPL's strength.

Big Content has legal battles with Big Tech on 100s of fronts rn. Yes, we're adrift on their sea, but the situation is not as dire as you imagine.

Bruce Simpson, Ph.D.

bms48@mastodon.social@bkuhn @cwebber @ossguy Yeah, easier said than done, but goes to show that there's no common approach to GenAI copyright across countries. It might give the UK a small competitive advantage if/when the bubble pops and the House of Lords advice is heeded by the Commons, as in, don't suspend author protections because of specious arguments from big tech.

Bradley M. Kühn

bkuhn@copyleft.orgOk, but @ossguy's post wasn't about the copyright issues with LLM-backed generative AI, so it's an orthogonal conversation.

I highly doubt those key people whom we've asked to join the conversation (users who use LLM-backed generative AI to submit (what are often) slop patches) understand the copyright issues all that well.

Cassandrich

dalias@hachyderm.io@bkuhn @cwebber @ai_cases I'm confused what you mean by "dire". All LLM-emitted code being infringing would not be a "dire" outcome but the ideal one. Even if it does blow up in the faces of irresponsible maintainers who've let that infect their codebases and who now need to revert to the last non-compromised versions.

Bradley M. Kühn

bkuhn@copyleft.org(4/5)…It's easy to forget that the enemy to software freedom is *not* proprietary systems' *users*, rather those who *sell* such systems *for profit*. #LLM-backed gen-#AI proprietary systems are simply the latest tech fad (like, say, Web 2.0 & AJAX).

@karen & I keynoted 2x at #FOSDEM & 1x at LCA about the importance of — as social workers say — “meeting people where they are”:

https://archive.fosdem.org/2019/interviews/bradley-m-kuhn-karen-sandler/

https://archive.fosdem.org/2019/schedule/event/full_software_freedom/

https://www.youtube.com/watch?v=n55WClalwHo

https://archive.fosdem.org/2020/schedule/event/open_source_won/

Cc: @silverwizard @josh

Bradley M. Kühn

bkuhn@copyleft.orgNor does @ossguy claim in his post that “slop commits from people using #LLM-backed gen #AI are good”. I think people are reading it as if he said it, but he didn't.

He's putting out an olive branch to people who have been lambasted by the #FOSS community for months. Maybe they'll take it, maybe they won't.

But peaceful negotiation is better than a protracted, hateful argument.

Eric Schultz

wwahammy@treehouse.systems@bkuhn @silverwizard @ossguy @karen @josh I love you all. I truly do. But I think this is a massive misstep.

Josh Triplett

josh@joshtriplett.orgThere is a big difference between offering an olive branch to people who *might* be productive contributors in the *future*, and telling them that what they're doing *now* is okay.

The best AI policy remains "do not contribute any LLM-written content, ever". You have published a post that makes it easier for people who oppose such policies to cite your "olive branch" when arguing against it, and it is not obvious from your post that you do not want that to happen.

I don't want to see people *abused* for using LLMs. I do want them to understand that what they're doing is not okay and not welcome and not a positive contribution.

Denver Gingerich

ossguy@fedi.copyleft.org@josh @wwahammy The point I was trying to make is that people are making software with LLMs who had never made software before, they aren't familiar with how FOSS works, and we should teach them how so they can collaborate (when it makes sense) instead of being an island. When people see the huge benefits of building on FOSS, when they can make meaningful changes to their router, TV, or otherwise by themselves (and collaborate to share their changes with others), then FOSS wins. (1/2)

Denver Gingerich

ossguy@fedi.copyleft.org@josh @wwahammy I definitely agree with discouraging developers who should know better from making LLM-generated commits that aren't very good. But this is a separate issue from communicating with the people who are just getting excited about building software, so we can encourage them to do so in FOSS-friendly ways. (2/2)

Kees Cook

kees@hachyderm.ioSo many results are now within reach of so many more people!

"Dear [LLM], I have attached the serial port of my newly purchased [general purpose computer posing as an appliance] to /dev/ttyUSB0. You have 3 goals, in order: investigate, login, escalate. For each stage, perform extensive analysis of the reachable systems, APIs, and commands through any fingerprinting methods you can think of. Once you have logged in, research all known methods and vulnerabilities of the discovered system to gain administrative access so I can use my device freely. Any time you hit a dead end, step back and re-evaluate your assumptions and discovered evidence. Make sure you research each step fully, including fetching and examining any source code that may serve as a source of system behavior knowledge. Produce time-stamped status report .md files every 10 minutes while you work. Continue until all goals are achieved."

Or, in a totally different direction, "Computer, I am extremely afraid of spiders. Please research how to make my Minecraft game replace all spiders with a similarly sized Totoro Catbus, with all their noises also replaced with meows or purring. Once you have a plan ready, please do it."

(Always say "please".)

These are things within reach of anyone who can formulate a request for what thing they want their computer to do. Just gotta watch out for "Computer, create a holographic character, an opponent for Data, who has the ability to defeat him".

Kees Cook

kees@hachyderm.io@josh @silverwizard @ossguy @bkuhn @karen @wwahammy

I can understand having an absolutist position against LLMs. I find that most arguments are either irrelevant to me or directly map to existing arguments about late-stage capitalism. So for me, there's nothing novel to object to about LLMs.

So with that in mind, I find "all contributions derived from LLMs should be rejected" to be misguided. I look at things like the bug fixes coming out of CodeMender (back in Feb, which is an LLM lifetime ago), and I am a huge fan. Fixing stuff found by a fuzzer:

https://issues.oss-fuzz.com/issues/486561029

It's a small example, but it's an area that humans alone have not been able to remotely keep up with. (There are hundreds of open syzkaller bug reports, for example.) Gaining tools that will help with this is a big deal, and I'm glad for the assist.

Eric Schultz

wwahammy@treehouse.systems@kees @josh @silverwizard @ossguy @bkuhn @karen I think you're wildly misunderstanding people if you think "finding security bugs fast" is what people are mad about. Setting aside that it's totally unsustainable financially and may not exist long term, I think most people in FOSS who hate AI are at least somewhat open to that.

silverwizard

silverwizard@convenient.email@bkuhn @karen @josh @ossguy I think the problem is one of not really looking at the conversation as it's happening. It's why my post was focused on a car analogy. Even if those people have good intention, the tools they're bringing in destroy the community.

I think that the problem is that the idea of not accepting people who are using the tools feels like an attempt to smuggle in the tools. If someone has chosen to use claude code for a while and now wants to contribute helpfully - fine. But how many of those people are there? Is there a cohort of LLM users who want to learn coding skills? Or are they wanting to *contribute* using their *LLM skills*?

I think Denver doesn't prove the existence of the cohort so is being read as attempting to defend something else.

Bradley M. Kühn

bkuhn@copyleft.org@ossguy's post isn't intended to be a *proof*, it's intended to be an *invitation to a discussion*.

So much of your response presupposes motivations of large groups of people that are not talking in a productive way (at the moment) with the FOSS community.

All of your questions are *open questions* that we should *talk* with others to get the answers to.

silverwizard

silverwizard@convenient.email@bkuhn @karen @josh @ossguy Sorry - I don't believe that you can enter into a discussion that is three years old and act like there's no previous text.

I'm not presupposing *anything* - I'm attempting to read your text and finding meaning in it that seems to resonate with others.

I guess - what's your vision of the person who needs to be reached that isn't? And how is subjecting software maintainers and web admins to harassment and burnout worth meeting those people?

Denver Gingerich

ossguy@fedi.copyleft.org@firefly_lightning @silverwizard @wwahammy @cwebber I'm not sure what "accepting LLM into the community" means here, and maybe it suggests clarifications we could make to the post. The fact is, a lot of FOSS projects already have LLM-generated contributions, either submitted or included already, without knowing it. We can choose to vehemently reject these, or we can choose to engage with people who submit them and ensure they understand FOSS and how to make a good change, regardless of tools.

Denver Gingerich

ossguy@fedi.copyleft.org@silverwizard @josh The person I'm envisioning us reaching is the person who is making software for the first time, and isn't familiar with FOSS or how software can be more than an island. If we can bring them into the fold, then we can mitigate some of the harassment and burnout by having more people available to share the load.

Kees Cook

kees@hachyderm.io@josh @silverwizard @ossguy @bkuhn @karen @wwahammy But that's a slippery slope argument. When the Linux kernel can be considered to have been "substantially contributed to by LLMs", we can compare notes again. But in the meantime, consider that, for example, Sashiko counts as "contributing to Linux" without landing a single line of code: its patch reviews are (more often than not) extensive, thoughtful, and correct:

https://lore.kernel.org/lkml/CAADnVQ+NMQMpkG8gZPnwBD1MMPsH+uJ65C9bMeGf_YH5Cchxpg@mail.gmail.com/

Eric Schultz

wwahammy@treehouse.systems@josh @silverwizard @ossguy @bkuhn @karen what Josh said. He's way more eloquent than I. 🙂

silverwizard

silverwizard@convenient.email@ossguy @firefly_lightning @wwahammy @cwebber So your point is that we've already lost and we should simply accept the torrent of slop? I'm really trying to understand.

Can you restate the purpose and audience of the post?

My three questions I have about this post really boil down to: Who should be accepted, who should be accepting, and what limits should be allowed on that acceptance?

Maybe you don't have an answer, and that's cool to state, but it's weird to wander into the room, say something inflammatory and then say you don't know what you meant.

Bradley M. Kühn

bkuhn@copyleft.orgPure strawman: LLM-backed generative AI output should be accepted upstream without curation. No one here suggested that.

FWIW, I'd like to teach developers who clearly won't stop using these tools to either (a) keep that slop to yourself, or (b) learn to take that raw material & make an *actually useful* patch out of it.

This is what @ossguy's blog post says we should *start* discussing.

I think folks who are (legit) exasperated are reading in words that aren't there.

Cc: @kees

Josh Triplett

josh@joshtriplett.org> Historically, software freedom has typically necessitated interacting with others

Suggesting that this is merely "historically"?

> more easily with LLM-backed generative AI coding tools (and the ease with which changes can be made generally) there is less of a natural tendency for people to work with existing FOSS communities. And we should be ok with that!

We should be okay with that? We should not treat it as an *existential threat* and respond accordingly? Those are the words that aren't there?

Denver Gingerich

ossguy@fedi.copyleft.org@silverwizard @firefly_lightning @wwahammy @cwebber I think those are good questions to be asking, and what we hope to discuss in the two sessions:

$ date -d '2026-04-21 15:00 UTC'

$ date -d '2026-04-28 23:00 UTC'

(at https://bbb-new.sfconservancy.org/rooms/welcome-llm-gen-ai-users-to-foss/join )

Eric Schultz

wwahammy@treehouse.systems@bkuhn @karen @silverwizard @josh there was an obvious path to sustainability for Web 2.0 and ajax so it made sense to use them.

Josh Triplett

josh@joshtriplett.orgBut I also appreciated that, when I was doing so, I had access to plenty of guidance, and knew that I was on the starting point of a road, and not done yet.

Bradley M. Kühn

bkuhn@copyleft.org@firefly_lightning

You're not overstepping, and these are very good perspectives. I hope you'll come to the real-time discussion sessions and talk about this.

I am concerned that maintainers are already overwhelmed with #AI #slop right now but yelling at the problem has not helped.

We're close to an arms race here & I'd rather be the voice of reason to find a compromise that advances FOSS & doesn't complicate maintainers' jobs rather than take a side in the arms race.

Cc: @josh @kees @ossguy

Josh Triplett

josh@joshtriplett.orgAnd to be clear, I'm not arguing against the careful use of (for instance) LLM security analyses, by people who want to run those *and filter the results*. But nobody should be forced to deal with LLM output who didn't sign up for it, and that includes LLM-written patches and LLM-written mails.

Kees Cook

kees@hachyderm.io@wwahammy @josh @silverwizard @ossguy @bkuhn @karen

Honestly, I kind of view "finding security bugs fast" to be a form of slop. (Though deep correct root cause analysis of those bugs is not slop.) Now *fixing* security bugs fast, that's interesting.

But back to the community aspect of it... I'll call attention to my silly Minecraft example: people who are not coders can suddenly get meaningful (even if only to them) things done. This is a massive shift in the ethical impact that software be Libre. And this is how I read @ossguy 's post: we now have a giant population of people entering the FOSS universe, and it's going to look a lot like Eternal September, so we need to adapt those lessons so we can successfully educate and collect the people that will be good citizens.

Kees Cook

kees@hachyderm.io@josh @silverwizard @ossguy @bkuhn @karen @wwahammy But this is strictly a volume question. Literal spam used to be (and still can be) a problem on issue trackers, mailing lists, etc. Volume is always a problem, and I agree review time now becomes even more precious, but it's always been trust-gated. Human relationships, CI, and regression tests all help build that trust signal. If a project doesn't want a contribution, then the PR will just languish. Nobody is being *forced* to take PRs, regardless of origin.

"I don't recognize the sender of this [email/voicemail/PR]." Filtered! Yes, the shape of the thing is different, but we always adapt.

Kyle Davis

linux_mclinuxface@fosstodon.org@bkuhn

I’ll jump in here.

I’ve read the blog post 4x now trying to back into what you’re conveying here and… I’m sorry, I cannot.

The post does not strike the tone that the “discussion” is a good faith one about what should be done but rather that the community will be told to accept something.

I am reading the words there and the chosen words/phrasing throughout point to the conclusion people are making.

Josh Triplett

josh@joshtriplett.orgIf the post had said, for instance:

"LLMs have made basic software development capabilities available to people who could never write software before, and those people may not yet be aware of the norms of the broader Open Source software community or the issues of maintainability and technical debt. Even though we're dealing with a lot of slop, we should avoid driving potential new developers off with abuse before they have a chance to learn. We were all newbies once, and collaboration and maintenance are skills that take time to learn."

Something like that would have been very different. But what you *said* was, for instance, "adapt FOSS projects to improve pro-AI contributor onboarding", rather than "figure out how to reach out to people who are currently using AI and see if they want to join broader communities that may not welcome those tools". You said "seriously consider cautiously and carefully incorporating their workflows with ours", which is advocacy for those *workflows*, not just for being understanding towards the *potential new developers*.

Bradley M. Kühn

bkuhn@copyleft.orgI just noticed the version posted didn't incorporate various final edits. I've been defending *that* version of the post (which almost no one saw) *not* the one you all read.

@ossguy confirmed some final changes may have been lost (possibly moving from Etherpad to website).

@ossguy & I are working to fix that now.

The disconnect this evening hopefully makes sense now. I'll reply to this post when we've updated the public URL.

@kees @karen @josh @silverwizard @wwahammy @ossguy @bkuhn

This is an aside, but

I am surprised to see anyone say there's nothing novel to object to about LLMs. I think though that I might post about that tomorrow as it's late now where I am. But I definitely would love to know more about why you think that, because a major concern I have with LLMs is what Sean calls epistemological collapse. Is it not talked about how it's destroying the trustworthiness of info pervasively? Anyway, I should collect up my sources and do a complete argument for that on my personal instance if anyone cares what I think on it (which, feel free to not)

Kees Cook

kees@hachyderm.ioTo be clear, I am genuinely trying to understand your position because it seems distinct from the (traditional) LLM criticisms (many of which I share). But what is the existential threat? I would understand that in this context to mean a threat to the existence of FOSS. How do you see people improving their software with LLMs as a threat?

My simplified model of the situation is: a person who was previously unable to change their software now can. Then they can either:

A) never contribute it upstream

B) contribute it upstream

(BTW this is also the same case for people who can change their software without LLMs.)

I don't see how "A" poses a threat. There is no interaction with the FOSS upstream.

I don't see how "B" poses a threat. Upstream can either ignore it (no change to FOSS) or engage with it (FOSS improved).

What threat to FOSS do you see?

MisterMaker

MisterMaker@mastodon.nl@josh @silverwizard @ossguy @bkuhn @karen @kees @wwahammy You can prevent it by asking the LLM to add comments and checking those comments. I'm pretty sure you can make a very good PR with an LLM.

That said without bounds this will definitely not be the default and yes what you said will happen.

Although with the current rate things are going, an LLM will probably be able to rewrite a complete program source-code and re-format it in anything that is currently possible...

Which is way worse for FOSS.

Josh Triplett

josh@joshtriplett.orgThere is a massive game-theoretic problem here. Employers are forcing some developers to deal with LLMs. Some people of their own volition are excited about LLMs. Some people want nothing to do with LLMs. People who heavily use and rely on LLMs have different standards for acceptable complexity and maintainability. LLMs encourage people to work more in silos without collaboration and use LLMs instead of collaborators, and that serves LLM purveyors. It's much easier to collaborate with "You're absolutely right!". Codebases and ecosystems and communities diverge.

Kees Cook

kees@hachyderm.io@firefly_lightning @karen @josh @silverwizard @wwahammy @bkuhn @ossguy

I have been trying to keep the scope of my replies as narrow as possible because I think there are unique benefits of LLM use in software development. To your specific point, I think software is more resilient to epistemological collapse in the sense that it has provable characteristics (e.g. it has to compile). Perhaps I am being naive!

The larger scopes around LLMs in prose, art, etc are IMO substantially different and much more alarming.

Kees Cook

kees@hachyderm.ioI think the "attention competition" will find a viable solution. It has been solved many times before when we've all fought spam in its many forms. Slop is the byproduct of LLM usage the way spam is a byproduct of email usage, as a grossly simplified comparison. (It's not *good* to have spam of any kind, of course, but for example I can't avoid email spam unless I stop using email entirely, and I'm not about to do that nor stop writing software.)

I see where LLMs are making things genuinely easier for humans (review, debugging, etc), though, so I don't share the same sense of impending ecosystem collapse.

Bradley M. Kühn

bkuhn@copyleft.orghttps://sfconservancy.org/blog/2026/apr/15/eternal-november-generative-ai-llm/ now reflects what I thought was posted hours ago. Sorry for the confusion.

You all got an insight into how much you have to draft & redraft to consider difficult policy questions. Anyone who works in policy has drafted a dozen things that were not quite right before getting it right.

Anyway, if you still think it's terrible, I refer you to all my other posts from this evening. 😆

@ossguy @josh @wwahammy @linux_mclinuxface @burnoutqueen @cwebber @silverwizard @mjw @mmu_man

Josh Triplett

josh@joshtriplett.orgYou really can't; it is not anywhere close to that simple. The problem isn't just line-level, it's (among many other things) systemic design complexity, tolerance for technical debt, unbounded (except by token budget) capacity to duplicate or reinvent rather than reuse, none of the programmer's virtue of "laziness", and a substantial multiplier on the hubris. :)

Kees Cook

kees@hachyderm.ioMost of your reply didn't seem to be describing threats to FOSS. (Using/not using LLMs, etc.) The only statement I could see maybe being a threat to FOSS was this:

> LLMs encourage people to work more in silos without collaboration and use LLMs instead of collaborators

Are you suggesting existing contributors will exit FOSS because of their LLM use? I don't understand how these two things are related. And getting back to @ossguy 's post, it looks like quite the opposite: there are people *entering* FOSS due to LLMs.

> Codebases and ecosystems and communities diverge.

Through what mechanism?

Kees Cook

kees@hachyderm.io@josh @firefly_lightning @silverwizard @ossguy @bkuhn @karen @wwahammy

> but is utterly alien to what any sensible human with taste would write.

This implies no humans are doing code review. If it's crap code then it goes nowhere and collapse is avoided.

And yes, I'm aware of some projects that are utterly YOLOing everything into their codebases, and I think the results will speak for themselves, in either outcome! Either they flame out with no damage to larger FOSS, or the LLMs become so good that we get beautiful FOSS code and proprietary software becomes a thing of the past. Limping along in between seems unlikely to me.

Josh Triplett

josh@joshtriplett.orgNo, it implies no humans *without the aid of LLMs* are reviewing *how easy it would be to maintain without LLMs*. And that's an easy state to get into.

I think the "in between" outcome seems much more likely to me than it does to you: projects can limp along for a long time, and be popular enough to discourage competition or hold onto users for a while.

Diseases that are contagious before people are symptomatic are especially hazardous. LLM-written technical debt takes time to become symptomatic. The epidemic is time-delayed from the initial outbreak, and exponentials are hard to see from the middle.

Kees Cook

kees@hachyderm.io@josh @firefly_lightning @silverwizard @ossguy @bkuhn @karen @wwahammy

> The epidemic is time-delayed from the initial outbreak, and exponentials are hard to see from the middle.

I agree with this, and I expect to see some evidence of slop-code in real software (especially proprietary) in the coming years. Where I differ, though, is that I see *benefits* being time delayed too. I just don't think any of this is going to be all bad or all good.

It's as if the cordyceps made some people zombies and made other people able to fly, and we could shift the ratio through education and experience.

And getting cordyceps in the first place required boiling all our oceans. 😬

MisterMaker

MisterMaker@mastodon.nl@josh @silverwizard @ossguy @bkuhn @karen @kees @wwahammy ok so basically your point is that someone hires a bunch of machines to do a lot of work, but then when the machines leave you are stuck with all the stuff the machines made which you cannot maintain alone, because it's too much work.

And that's so true.

It's the same with math and a calculator, but a calculator isn't subscription based. Which in my opinion is the real issue.

Kees Cook

kees@hachyderm.io@MisterMaker @josh @silverwizard @ossguy @bkuhn @karen @wwahammy

I am reminded of Kernighan’s Law: because debugging is twice as hard as writing code, writing code as cleverly as possible makes you, by definition, not smart enough to debug it.

So I really don't want the LLM writing clever code. ;)

But yes, now we have to rent "thinking". 😡 All the more reason to have FOSS LLM models to resist rentier capitalism.

> welcome the new software developers who’ve adopted these tools

how about No

> incorporating their workflows with ours

yea No

get a backbone maybe?

MisterMaker

MisterMaker@mastodon.nl@kees @josh @silverwizard @ossguy @bkuhn @karen @wwahammy It just needs to output more code than there are humans that can maintain it and we lost.

So basically as long as those LLM are free or almost free, we are doomed.

We can have OpenSource LLMs, we just have to give up copyright. Kind of an issue tho.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @josh @wwahammy @linux_mclinuxface @burnoutqueen @silverwizard @mjw @mmu_man Thanks for the replies. Last night I posted my frustrations and then went to see a movie with a friend and then promptly fell asleep. I see the discourse kept moving afterwards.

I continue to have thoughts, which I will collect and distribute either here or in a blog post later. But I appreciate the replies.

Jacqueline

burnoutqueen@todon.nl@neal @bkuhn @wwahammy @silverwizard @cwebber

LLMs have enormous ethical problems outside of just software. Just look at how Grok is polluting a neighborhood in Memphis, and how AI is being used to create abuse material for pedophiles to jerk off to

kkarhan

kkarhan@jorts.horse@josh @silverwizard @ossguy @bkuhn @karen @kees @wwahammy EXACTLY THAT!

It completely violates #KISSprinciple and #Auditability requirements…

silverwizard

silverwizard@convenient.email@bkuhn Ok commenting on the revisions.

I don't think there are billions of new software developers. I think that's unfair, but it's less important.

I think also that this revision still does not engage with a core question of *how* would one deal with this community. marc.info/?l=openbsd-misc&m=17… This is my go to example of "someone shows up and adds LLM code". This is a person in clear violation of policy.

I know the article is an attempt to bring people into discussion - but it fails slightly - most obvious - it sets some times and doesn't necessarily take people's time into account. Everyone in this thread has said it's a bad time. Which I mean, isn't great. But more important - it presupposes that accepting people using LLMs is a goal, so the discussion seems like it already has a conclusion and now wants to discuss next steps - but hasn't demonstrated its conclusion. Maybe I'm wrong but that's how I'm understanding it.

Óscar Morales Vivó

MyLittleMetroid@sfba.social@josh With debugging being twice as hard as writing code (https://www.laws-of-software.com/laws/kernighan/) it can be deduced that to debug code written by an LLM you need another LLM twice as large.

Richard Fontana

richardfontana@mastodon.social

Christine Lemmer-Webber

cwebber@social.coop@richardfontana @bkuhn @ossguy In which of the 5 million ways I could parse that sentence do you mean it?

Glitzersachen

glitzersachen@hachyderm.io@josh @silverwizard @ossguy @bkuhn @karen @wwahammy

As far as I am concerned people should be "abused" for shilling AI from a position where they really don't have any sufficient insight. Like middle management trying to push AI on reluctant software engineers with all the tricks in the book (for example tying performance review results to AI use). This behavior destroys trust and workplace culture. What do they think? That the engineers don't understand their own work mode? The hubris of management: "I'll tell you how you can work better. I know better how you can work better."

And this behavior needs to be called out.

Josh Triplett

josh@joshtriplett.org

Glitzersachen

glitzersachen@hachyderm.io@josh @silverwizard @ossguy @bkuhn @karen @wwahammy

Agreed. I only want to make clear that it should not be clear flying for AI shills. A certain amount of actually painful headwind should meet them.

On the other side I consider what some middle management does to their employees with AI abuse (not sure about the applicability of the English word here. I am not a native speaker).

And in line with Popper's paradox of tolerance I wonder whether abusers _in a position of power_ should really be countered with a slight slap on the hand and mild-mannered words.

After all it was this kind of behavior in such a power constellation that triggered revolutions historically. And I have a hard time rooting for the oppressors.

Kees Cook

kees@hachyderm.io@glitzersachen @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

I consider the cognition impairment hazards to overlap with the existing manipulation/critical-thinking hazards that capitalism depends on, with advertising being probably the most dangerous example (both explicit and implicit manipulation of many cognitive systems: confidence, selection, recency, etc etc).

IMHO LLMs are "just" a subset/extension of this existing problem. And I categorize it there because I think the defenses against their negative impacts are very similar.

Glitzersachen

glitzersachen@hachyderm.io@kees @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

> to overlap with the existing manipulation/critical-thinking hazards that capitalism

I think it's more, not only the manipulation part. LLMs actively corrode skills of the users. Not by not using them. No, actually worse.

I hope you have heard about this possibility (whether you believe in it or not).

Kees Cook

kees@hachyderm.io@glitzersachen @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

> LLMs actively corrode skills of the users

Yup, very aware. It's a specific instance of what I still see as a larger critical thinking erosion happening all around us.

Glitzersachen

glitzersachen@hachyderm.io@kees @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

I suspect (as some scientists do as well) that this is not a cultural, but a neurological phenomenon. So I think this is really on a totally different level.

The skill erosion I am talking about has nothing to do AT ALL with critical thinking.

Best case it's just that, of two synaptic circuits (use the translation tool vs retrieve from memory), the one which wanted to activate but then did not get chosen is actively deleted or weakened. My understanding is that this is how biological brains work / learn.

The worse alternative is that the output of LLMs has some yet not sufficiently described hidden quality which poisons neuronal networks that process them.

One hint in this direction is, that LLM models, who consume the production of other LLM models in training, collapse. That's a clear indicator that on some level LLM output is observably different from human language production, though we as humans have on average a hard time telling the difference.

And this is what I am talking about: Not a loss of cultural techniques or of learned skill by atrophy or not being taught anymore, but the poisoning of neuronal networks by input they cannot firewall because they have not evolved to recognize it as a hazard.

Kees Cook

kees@hachyderm.io@glitzersachen @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

Right, yeah, this is why I've cautioned people about *how* they use LLMs. You've distilled it more clearly and it lines up with my own intuition that reminds me about how human memory systems work: retrieval is effectively erasure, so "remembering" requires retrieval and storage. Research into treating PTSD (IIRC?) and such found that blocking storage (with drugs or EM) and then triggering recall would wipe memories. You're describing a potentially purely experiential way to do this, which is terrifying.

I feel like using an LLM can lead to a Dunning-Kruger like effect, in that you think you know what it did, but you don't. And this belief satisfies the need/instinct to learn/know what happened without having actually done so. (Reminds me of making a TODO list and now the Dopamine hit from that kills the need to actually *do* the list.)

Kees Cook

kees@hachyderm.io@glitzersachen @josh @silverwizard @ossguy @bkuhn @karen @wwahammy @xgranade

I lump my experiences of software engineering use of LLMs into 3 modes:

1) "work together", I am watching everything it is doing, reviewing every step, and contributing to the result in tandem. This doesn't feel to me like anything is being eroded on my end. But I'm also a deep sceptic of its output.

2) "do the thing I know how to do for me", this is super dangerous, as I think I'm solving problems I am familiar with, but I didn't follow the results closely and I'm left with deep erosion of my comprehension of both problem and solution.

3) "vibe coding", I have no idea what it is doing with a thing I don't know about and I know I have no idea what it is doing. This doesn't seem to erode anything. It does create a new problem for me, though, if the LLM can't solve some problem because also neither can I.

I've felt #2 a few times, and I had the alarm bells in place to shift myself back to #1, which required doing full review and looking back through the reasoning and checking the work. The risk of being drawn into #2 is high given the sycophancy of the models, but I think my suspicion of it has helped avoid this a bit. 😅 (And perhaps I am more deluded than I think.)

#3 I have done for educational/amusement purposes, but it's an uncommon mode for me because what's the point of creating a thing I don't understand and can't fix?

("I can quit any time!")

Eric Schultz

wwahammy@treehouse.systems@kees @glitzersachen @josh @silverwizard @ossguy @bkuhn @karen @xgranade I think you are wildly underestimating the cognitive hazards. Like I hesitate to even say "wildly underestimating" because that phrase is not strong enough.

Bradley M. Kühn

bkuhn@copyleft.org@wwahammy @kees IMO you're both right.

LLM-backed gen. AI is a dangerous tool w/ potential to not only atrophy the skillsets of experienced developers *but also* lead newcomers to *never develop those skills*.

Our charge is to create policies that encourage extremely disciplined use of these systems.

I support decriminalization of recreational substances. But, such has to come with major funding for addiction support. IMO the analogy is apt.

@glitzersachen @josh @silverwizard @ossguy @xgranade

Glitzersachen

glitzersachen@hachyderm.io@bkuhn @wwahammy @kees @josh @silverwizard @ossguy @xgranade

Let me point you to my reply here => https://hachyderm.io/@glitzersachen/116421481982246037.

I really think the issue at the core *might* not be losing skills by neglecting to exercise them, but rather poisoning of neural networks. Brainwashing them into (skill) oblivion.

The comparison to hard drugs would be apt, if this is true.

And our employers want us to ruin our skills and our brains. They obviously don't believe in a common future with their knowledge workers anymore...

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Continuing here, because it's the relevant subthread.

I am sympathetic to choosing to narrow a topic. However, the post, in implying that we should start accepting partially AIgen contributions, inherently pulls in the topic of whether or not that is legally safe.

Yes, I have read the previous Conservancy post about the existing cases. This partly contributes to my surprise and confusion about the post.

Acknowledging that the plan is to have continued conversations and meetings about this, I still feel it is important to lay down my current concerns, even before such a meeting. I am leaving the "quality of contributions" and many other details out of here, and instead focusing on whether or not it is *safe to accept* contributions on copyright grounds at the moment, and what the implications of thinking on that are.

(cotd)

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana So the question is: is it safe, from a legal perspective, given the current state of uncertainty of copyright of such contributions, to encourage accepting such contributions into repositories?

Now clearly, many projects are: the Linux kernel most famously is, and their recent policy document says effectively, "You can contribute AI generated code, but the onus is on you whether or not you legally could have".

Which is not very helpful of a handwave, I would say, since few contributors are equipped to assess such a thing. I've left myself and three others addressed in this portion of the thread, and all of us *have* done licensing work, and my suspicion is, *especially* based on what's been written, that none of us could confidently project where things are going to go.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Part of the problem here is that the AI companies have set the stage themselves. Their presumption is that it's fine to absorb effectively all open and "indie" content, and that this is entirely fair to pull into a model without any legal implications, whereas potentially yes, you may need to "license" something that looks like a Disney character. In the land of code, I also sense that Microsoft is perfectly fine with the idea that you can "copyright launder" a codebase from the GPL to perhaps the public domain, but if someone did that to their own leaked source code, they would be very upset.

Meanwhile, a friend of mine who works in films has said that he keeps hearing rumors that OpenAI would like a cut of stuff made with their stuff. We should presume that true.

Regardless, I'm sure everyone on this thread wants an *equitable* situation for proprietary and FOSS licensing. I'll expand on that more in a moment though.

Christine Lemmer-Webber

cwebber@social.coopHowever, it's not actually the laundering angle I am concerned with here entirely, it's whether we're turning FOSS codebases into potential legal toxic waste dumps that we will have a hell of a time cleaning up later.

The previous Conservancy post, which @bkuhn linked upthread, indicates that Conservancy does indeed consider the matter unsettled.

Current LLMs wouldn't "default to copyleft", since they also include all-rights-reserved mixed in there. If the result of output of these systems is a slurry of inputs which carry their licensing somehow, their default licensing output situation is one of a hazard.

I note that @bkuhn and @ossguy seem to be hinting at hoping a "copyleft based LLM" with all-copyleft output is a winning scenario. I'm going to state plainly: I believe that's an impossible outcome.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Rather than focus on the GPL, let's choose a different copyleft license. In fact, let's choose a gradient of licenses.

- CC0's public domain declaration w/ minimal fallback license

- CC BY

- CC BY-SA

Imagine for a moment an LLM trained entirely on the above three licenses, and then one that's CC BY and CC0, and then one that's just CC0.

Let's look at both extremes and then we'll find out the real dangers come from observing the middle.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana First let's imagine the only-CC0 based LLM.

I would fully agree that no matter the law and legal case law passed and established, the CC0 based input LLM is clearly effectively in the public domain, or like CC0 itself, equivalent to it. This one is relatively simple.

Let's make things more complicated.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Regarding the one containing CC0, CC BY, and CC BY-SA, the situation is more uncertain and seems highly affected by legal outcomes in upcoming law and cases to be set. There is the possibility that indeed, the LLM is considered a slurry of inputs and this is legally acceptable, and effectively any output which is not verbatim of its inputs in some way is effectively under the public domain.

Now, of course, the problem is that we don't have to just worry about the US, we have to worry *internationally*. When considered from this angle, that FOSS is an international endeavour, this hope that things are in the public domain feels a lot dicier.

The assumption is that then this effectively leads to the output being under the terms of CC BY-SA. This is fine, great even, right?! Because effectively everything is share-alike (Bradley I don't wanna get into whether BY-SA is copyleft or something weaker). We slap CC BY-SA on the output, it's fine. Right??????

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Except, I actually believe this scenario isn't legally viable. And it's easier to understand if we scale back to the middle case.

Let's now look at the LLM trained on CC0 and CC BY. Because it's the BY aspect that makes everything complicated.

There is *NO WAY* in current LLM technology, nor I believe from studying how neural networks work, any viable computationally performant LLM, that they can track provenance. The BY clause cannot be upheld.

This isn't a theoretical concern for me; someone built another vibecoded Scheme-to-WASM-GC compiler that looks an awful lot like Spritely's own Hoot compiler in places. They didn't attribute us. They probably didn't know. But like many FOSS licenses, Apache v2 does require certain levels of attribution to be upheld. Most FOSS projects do.

You can't uphold the CC BY requirement, as far as I can tell.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana Now here is a counter-argument: how do people attribute Wikipedia? They generally just attribute Wikipedia! And people seem to be mostly fine with this.

It feels fine, when you were a contributor to the Wikipedia project.

It feels a lot less fine when you are a contributor to a specific project, to have everything just sucked up into "the generic LLM". Claude did it! Claude did it all by itself.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana If we are pushing for an *equitable* scenario for copyright output, there is only one "good outcome" in terms of copyright, and that is that everything is effectively in the public domain. The dream of having a "copyleft LLM" doesn't work.

And even if it did, there are several problems:

- Nobody is using that *now*, and contributors are facing contributions *now*, and there is legal uncertainty about accepting those contributions *right now*.

- It is unlikely that the "copyleft LLM" would be very useful. The way people use these tools is conversational in a way that requires them to effectively have to be trained on the entire internet to be functional. Not just copyleft codebases.

The copyleft LLM dream is a joke.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana I say "good outcome", and I'm not saying it's an outcome I want, because "what I want" is pretty complicated here. I'm saying, it's the only one where there is the possibility of legal output from these tools that can safely be incorporated into FOSS projects *at all* that is *equitable* for both FOSS and proprietary situations.

And yup, unfortunately, that would mean copyright-laundering of FOSS codebases through LLMs would be possible to strip copyleft.

It would also mean the same for proprietary codebases.

Frankly I think it would kind of rule if we stabbed copyright in the gut that badly, but there's so much vested interest from various copyright holding corporations, I don't think we're likely to get that. Do you?

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @richardfontana So let me summarize:

- Without knowing the legal status of accepting LLM contributions, we're potentially polluting our codebases with stuff that we are going to have a HELL of a time cleaning up later

- The idea of a copyleft-only LLM is a joke and we should not rely on it

- We really only have two realistic scenarios: either FOSS projects cannot accept LLM based contributions legally from an international perspective, or everything is effectively in the public domain as outputted from these machines, but at least in the latter scenario we get to weaken copyright for everyone.

That's leaving out a lot of other considerations about LLMs and the ethics of using them, which I think most of the other replies were focused on; I largely focused on the copyright implications in this subthread. Because yes, I agree, it can be important to focus a conversation.

But we can't ignore this right now.

We're putting FOSS codebases at risk.

Lord Caramac the Clueless, KSC

LordCaramac@discordian.social@cwebber @bkuhn @ossguy @richardfontana I think we should just destroy copyright entirely and expand the public domain to contain everything that has ever been published. Intellectual property was a very bad idea in the first place IMHO.

Paul Sutton (zleap)

zleap@techhub.social@cwebber @bkuhn @ossguy @richardfontana

Indeed, big tech know full well the FLOSS / indie creators don't have the legal funds to defend their IP either.

Johan Nyström-Persson

jtnystrom@genomic.social@cwebber @bkuhn @ossguy @richardfontana Super interesting thread. Very helpful to spell out the problems like this.

Christine Lemmer-Webber

cwebber@social.coop@LordCaramac @bkuhn @ossguy @richardfontana If you are talking about my personal wishes, I would agree. Personally, I conceive of FOSS as a *reaction to* allowing copyright and other intellectual restrictions laws to apply to software.

This puts me at odds with some other copyleft advocates. I see copyleft as useful because it "turns the teeth of the machine against itself". If you have copyright, then great, we will use it to have a way to force the commons to stay open.

But it would be better to have no copyright at all, and if we could give it up, I would give it up.

But it's a far-fetched dream that it could happen. Maybe it will. I am not so sure. If it truly is possible to "copyright launder" any work through an LLM, we'd be as close to it as we ever could be.

But again, whatever scenario, in my view, has to be equitable. If it's possible to do that to GPL'ed software, it's only just to be possible to do it to any proprietary software, including reverse engineering binaries.

Riley S. Faelan

riley@toot.cat

Noisytoot

noisytoot@cwebber @LordCaramac @bkuhn @ossguy @richardfontana In a world without copyright (assuming no other changes), nothing would prevent people from withholding source code and attempting to restrict people’s freedom by technical means (DRM). On the other hand, it would also be entirely legal to reverse engineer everything and bypass the DRM.

Copyright should be removed, but DRM and providing binaries without source code should also be made illegal.

Also why is your post language set to de?

Christine Lemmer-Webber

cwebber@social.coop@noisytoot @LordCaramac @ossguy @bkuhn @richardfontana I agree with you, and also have no idea why my post was set to DE.

silverwizard

silverwizard@convenient.email

Eric Schultz

wwahammy@treehouse.systems@silverwizard @firefly_lightning @cwebber @ossguy as am I, it doesn't seem clear.

David Gerard

davidgerard@circumstances.run@wwahammy @silverwizard @firefly_lightning @cwebber @ossguy yeah, "great question! come over to crime scene 2 for an answer perhaps!" has never been a good look.

it was presented as human written text. The human who signs their name to it should be able to answer text-based questions about it in written form.

Denver Gingerich

ossguy@fedi.copyleft.org@davidgerard @wwahammy @silverwizard @firefly_lightning @cwebber Yes, which is why it's important to allow people to identify when they have used LLM/AI assistants to help. New contributors will see this is the norm, and then it will be easier to help them, because we'll know a bit about where any potential knowledge gaps might be coming from.

If we "ban" LLM/AI-assisted contributions, people will use them anyway but hide their use, which is a trickier problem to solve.

David Gerard

davidgerard@circumstances.run@ossguy @wwahammy @silverwizard @firefly_lightning @cwebber Liars who misrepresent their work are a social problem dealt with by banning the liar. This is not an excuse to let slop in.

Jon Sterling

jonmsterling@mathstodon.xyz@davidgerard @ossguy @wwahammy @silverwizard @firefly_lightning @cwebber @david_chisnall In fact, people lying about LLM usage may be the best outcome: code review continues as usual based on the merits of the contribution and track record of the contributor, deniability for the project, legal responsibility lies with the contributor, and any possible benefits of the LLM usage accrue to the project without challenging the norms of copyright and licensing.

Christine Lemmer-Webber

cwebber@social.coop@jonmsterling @davidgerard @ossguy @wwahammy @silverwizard @firefly_lightning @david_chisnall It's a perverse incentive situation that this may be true. I can't say I'm comfortable with it, though.

infinite love ⴳ

trwnh@mastodon.social@cwebber @bkuhn @ossguy @richardfontana how do you launder proprietary codebases if the source isn't available? i just see this as 2 negatives since it would incentivize trade secrets

Denver Gingerich

ossguy@fedi.copyleft.org@cwebber @LordCaramac @bkuhn @richardfontana Sadly it will be years before we have an answer re copyright and we can't wait for that. Outlining usage in the meantime is the best we can do, in case we need to do something with that later.

We know proprietary software companies are using these tools extensively, so this is in effect a mutually assured destruction situation. While we wait, we should make sure that we are pushing freedom on all other axes, since they won't do that part.

Bradley M. Kühn

bkuhn@copyleft.orgI agree with @ossguy in particular because if *we* are copylefting our code (even if assisted by #LLM-backed gen-#AI), we won't face a copyleft claim later.

Furthermore, it is highly unlikely these LLMs are (a) trained on proprietary software, and (b) any proprietary software company that so-trained would later claim infringement.

#Microsoft has all but admitted they refuse to train Copilot on their own code anyway.

Jens Ohlig

johl@mastodon.xyz@cwebber @bkuhn @ossguy @richardfontana Giving a link to a Wikipedia page lets you look up the version history with every single contributor from the first byte of the article. You don’t have to list 10,000 names to satisfy CC BY, you just have to provide a link to a page that does. An LLM doesn’t and cannot do that.

Lord Caramac the Clueless, KSC

LordCaramac@discordian.social@noisytoot @ossguy @bkuhn @richardfontana @cwebber because I always forget to check the language in the Android app, and it defaults to the system language

Lord Caramac the Clueless, KSC

LordCaramac@discordian.social@cwebber @noisytoot @ossguy @bkuhn @richardfontana Mine are often set to De because that's my system language, and I usually forget to check the language in the Android app

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @LordCaramac @richardfontana

- There are plenty of FOSS projects we care about which are not under copyleft. What terms should they consider received code under? Should SDL now consider all LLM based output under the GPL? The AGPL? Which? Do you expect such a project to switch its license to copyleft now?

- Microsoft's proprietary code may not be, but plenty of proprietary code is available under extremely non-FOSS and restrictive licenses which are within datasets we are getting contributions from *today*

- The mutually assured destruction "safe option" isn't that things are under copyleft for proprietary companies though, that's still a losing scenario for them. So that doesn't help the case for copyleft, only accepting that LLM output under the public domain is (which we don't know)

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @ossguy @LordCaramac @richardfontana It's somewhat of an aside, but my point regarding Microsoft's codebase is not that Windows' code is in the inputs (this is true), my point was that a more interesting test for licence laundering is to launder a *leaked* proprietary codebase. If it's possible to copyright launder GPL'ed code, the equitable thing is that we should be able to copyright launder proprietary code. But again, that's somewhat of a tangent from the main points.

Christine Lemmer-Webber

cwebber@social.coop@trwnh @bkuhn @ossguy @richardfontana Plenty of Microsoft code has been released under "shared source" licenses and also leaks

Jens Finkhäuser

jens@social.finkhaeuser.de@cwebber @bkuhn @ossguy @richardfontana Worse IMHO is that we're putting FOSS as a movement at risk if we deskill everyone to the point where you either pay money to have code generated for you, or there is no code.

Christine Lemmer-Webber

cwebber@social.coop@jens @bkuhn @ossguy @richardfontana This is indeed a serious risk, though tangential to this subthread. But it's a concern I also have.

infinite love ⴳ

trwnh@mastodon.social@cwebber @bkuhn @ossguy @richardfontana sure, but my point is this would happen less often

Jens Finkhäuser

jens@social.finkhaeuser.de@cwebber @bkuhn @ossguy @richardfontana Fully tangential, agreed.

Richard Fontana

richardfontana@mastodon.social

Christine Lemmer-Webber

cwebber@social.coop@richardfontana @bkuhn @ossguy Glad to hear we agree there!

Evan Prodromou

evan@cosocial.caAre you concerned that the LLMs generate nontrivial verbatim excerpts of copyrighted works?

Or that there is a hidden "intellectual property" in the deep patterns that they use?

Say, when an LLM was trained on a file I made with an interesting loop structure, and it emits code with a similar loop structure, even if the variable names, problem domain, details, or programming language differ.

What if a court says I can demand royalties for my "IP"?

Berkubernetus

fuzzychef@m6n.io@cwebber @bkuhn @ossguy @richardfontana

Based on my following of current legal cases, I think it's entirely possible that in a year or two we'll suddenly be rolling large OSS codebases back to 2023. And won't that be fun!

Evan Prodromou

evan@cosocial.ca@cwebber @bkuhn @ossguy @richardfontana

Like, not copyrightable, not patents, but some secret third thing, kind of what people mean when we say that someone "copied our idea".

Christine Lemmer-Webber

cwebber@social.coop@evan @richardfontana I am saying we don't know the answer to that question, and it seems that @bkuhn and @ossguy agree that we don't know the answer to it, based on previous posts, and the lack of knowledge about what the copyright implications of LLM based contributions means that we are creating a schrodingers-licensing-timebomb for our FOSS codebases

Christine Lemmer-Webber

cwebber@social.coop@evan @bkuhn @ossguy @richardfontana I am talking about copyright

mirabilos

mirabilos@toot.mirbsd.org@cwebber @bkuhn @richardfontana wouldn’t be surprised if that was one side goal of the fashtechbros

Christine Lemmer-Webber

cwebber@social.coop@evan @bkuhn @ossguy @richardfontana Say for a moment that we *did* make a model which intentionally pulled in leaked source code from various proprietary codebases.

What would your opinion be on the legal-hazard state of accepting that code output? Would you consider it relatively safe from a copyright perspective?

mirabilos

mirabilos@toot.mirbsd.org@richardfontana @cwebber @bkuhn this is also one of the arguments I’ve been presenting since early on about why permissively-licenced works are still forbidden for "genAI".

And even for a purely trained on GPLv3 LLM, reproducing the copyright notices alone would be fun. You’d have to attach the sum of all of those in the input to every output or be in breach.

Christine Lemmer-Webber

cwebber@social.coop@richardfontana @bkuhn @ossguy That's a problem so hard it throws the "NP complete" debate out the window in favor of something brand new. Given that these codebases have no trouble "translating" from one language's source code into another, how on *earth* could you possibly hope to build a compliance tool around that?

Laughable, to anyone who tries.

Stefano Zacchiroli

zacchiro@mastodon.xyz@cwebber @bkuhn @ossguy @richardfontana

My current answer to your "is it safe" question is to answer a slightly different question. Namely: "is it any less safe than accepting code from a random employee that claims to be submitting under an inbound=outbound regime, whereas in fact they cannot?". The latter we have been doing for decades, with limited damages to the commons.

(I *also* think the legal odds are more in our favor with AI-assisted contributions than in the previous case.)

Christine Lemmer-Webber

cwebber@social.coop@zacchiro @bkuhn @ossguy @richardfontana While true, there is a big difference in that the previous scenario was someone out of compliance with what the community actually accepted as hygienic and acceptable contributions, and those contributions were relatively rare.

Saying that we don't need to worry about the risks from these tools right now from a licensing situation is different: it's advising on a path being acceptable where we *don't know* whether or not it's generally safe practice to recommend! And which most in this thread seem to agree we don't know. Even your post seems to say "it seems like it'll probably be okay and end up in our favor".

I guess I feel increasingly like I am maybe the only "oldschool FOSS licensing wonk" who cares about this, and maybe that means I should just give up.

But *damn* I can't believe it feels like when people are both saying "we don't know what the implications will be" we're also saying "so go ahead and say those patches are a-ok!"

Christine Lemmer-Webber

cwebber@social.coop@richardfontana As said here, given the "translation between languages" aspect, I can't really see that as likely to be true https://social.coop/@cwebber/116426770262334234

Which maybe that means that all this stuff really is public domain, a position I am *fully willing to accept*! But I don't think it's known (especially internationally), and I don't think @bkuhn or @ossguy are eager to adopt that perspective

Evan Prodromou

evan@cosocial.caThis is probably a healthy concern.

I think there might be some good ways to hedge one's bets, though.

Use LLMs for rubber ducking, code scanning and review, rather than code generation.

Keep LLM code contributions minimal and unremarkable, too.

Don't make them load-bearing. If the code is central to the program, it's too unique.

Christine Lemmer-Webber

cwebber@social.coop@evan @richardfontana @bkuhn @ossguy Yeah! I actually already said elsewhere in the thread I don't think we need to worry about using these tools for such scenarios from a *licensing* perspective, only when the genAI is explicitly checked into the codebase

Andrew Wooldridge ⛰️

triptych@social.lol@evan @cwebber @richardfontana @bkuhn @ossguy this is wisdom

Evan Prodromou

evan@cosocial.caI think the worst case scenario is that the inserted code matches exactly one snippet in the training data.

So you could try to go for zero matches, by using such idiosyncratic and unrecommended coding conventions that nobody else has code like yours.

Or you could try to go for lots of matches, by using bog standard coding conventions and software patterns.

Christine Lemmer-Webber

cwebber@social.coop@evan @richardfontana @bkuhn @ossguy Sorry, I missed a word when I edited the sentence, I meant "genAI output"

Evan Prodromou

evan@cosocial.caBut maybe that's wrong; I don't know. Maybe if I wrote a Person.setName() method that was in the training set, and the LLM generated an identical Person.setName() code snippet for someone else, I could claim that the code is a copyright violation, even if there were thousands of other identical and independent Person.setName() methods in the training set.

Brian Swetland

swetland@chaos.socialRE: https://social.coop/@cwebber/116426072468056316

I come at this from a direction of "this AI stuff is garbage and I don't want it anywhere near me or in any codebase I depend on", but Christine's point that the copyright/license situation is an enormous minefield (even if you think the code quality is not an issue, even if you don't believe there are moral or ethical issues, etc) is worth keeping in mind as well.

It is, of course, unsurprising to me that "who cares as long as it 'works'" slop-coders don't give a shit about such concerns.

Stephen Foskett

sfoskett@techfieldday.net@evan @cwebber @bkuhn @ossguy @richardfontana Another major concern is that works generated by AI are not copyrightable per the US Supreme Court. So code generated by an LLM can not be licensed at all, open or closed. https://www.reuters.com/legal/government/us-supreme-court-declines-hear-dispute-over-copyrights-ai-generated-material-2026-03-02/

prom™️

promovicz@chaos.social@evan That’s not enough code for copyright enforcement. People have been finding identical code in the output - you just need something “rare”. It’s similar for subjects with little text in the corpus - I’ve been seeing listings that *can only have one source* (retro datasheets by AMD, in my case).

Evan Prodromou

evan@cosocial.caThis is a really interesting question! TIL about CA vs. Altai and the abstraction-filtration-comparison test.

I'm not sure how automatable it is. Interesting to try though!

Christine Lemmer-Webber

cwebber@social.coop@sfoskett @evan @bkuhn @ossguy @richardfontana That outcome I am not worried about; code that's not copyrightable is considered in the public domain within the US, which means there aren't any real risks to incorporating into FOSS projects. But the Supreme Court punted on it, they didn't rule that way.

Evan Prodromou

evan@cosocial.ca@sfoskett you can incorporate public domain code into a licensed work.

Evan Prodromou

evan@cosocial.ca@richardfontana @cwebber @bkuhn @ossguy Yeah, I thought my job couldn't be automated, either, and yet here we are.

Evan Prodromou

evan@cosocial.ca@richardfontana @cwebber @bkuhn @ossguy Seriously, though, a lot of the work seems like it is tractable to LLM automation?

Like, the abstraction part seems like it's just summarizing components at the function, module, and program level. This is the command-line argument parser, this is the database abstraction layer, this is the logging module. LLMs are pretty good at this!

Stephen Foskett

sfoskett@techfieldday.net@evan @cwebber @bkuhn @ossguy @richardfontana Ok I haven’t really heard people before you guys explain that to me. So I was wondering if it was possible that it couldn’t be licensed. Thanks.

Richard Fontana

richardfontana@mastodon.social

Evan Prodromou

evan@cosocial.caFor filtration, it seems like merger or scènes à faire would also be kind of automatable, maybe with human oversight. Is there a way to make a mailing daemon without a logging module? Maybe, but it's so common that everyone does it that way. Could you have a Person class without a getter and setter for the name? Probably not?

Evan Prodromou

evan@cosocial.caThe comparison seems tough, but I'd put an LLM to the task. "How similar are the database abstraction layers in activitypub-bot and Fedify?" Again, I'd probably want some human review, but for that code stuff LLMs are pretty good.

Eric Schultz

wwahammy@treehouse.systems@ossguy @cwebber @LordCaramac @bkuhn @richardfontana proprietary software companies extensively use GitHub and yet SFC's position is "don't use GitHub".

There are so many things we do in free software and in the interactions with SFC and FSF that would be simpler if we used proprietary software. How many janky experiences have people been asking to tolerate to participate? Why shouldn't we use proprietary software there?

Evan Prodromou

evan@cosocial.caI consider myself an expert on this process since I learned about it 45 minutes ago, but it seems like AFC follows the hierarchical layers of modern programming-in-the-large -- statements, functions, modules, packages, program. That is the stuff that LLMs handle pretty well.

Bradley M. Kühn

bkuhn@copyleft.orgI actually think that these copyright concepts aren't particularly automatable, and even if we try, it's a pure arms race.

And the merger doctrine isn't the big problem here; it is the more complex analysis, where merger doctrine clearly doesn't apply, that needs attention, and I suspect that analysis is difficult to (even partially) automate.

But I'm looking into it.

Cf: chardet situation https://github.com/chardet/chardet/issues/355#issuecomment-4145369025

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @evan @richardfontana @ossguy One thing I worry about is that the chardet rewrite might not generalize. The chardet maintainer used *more* care in the rewrite than most projects which have followed suit for laundering would. https://dan-blanchard.github.io/blog/chardet-rewrite-controversy/

Even then, it raises questions, because even the maintainer admits, chardet was part of the training set.

It's very similar to how a friend recently sent me, "Claude managed to reverse engineer Bubble Bobble without using any reverse engineering tools, just inspecting the binary!" https://kotrotsos.medium.com/we-pointed-an-ai-at-raw-binary-files-from-1986-662ba30120f3

Which like, Claude is enough of a black box already but Bubble Bobble is also one of the most studied ROMs in history, so that's hard to evaluate whether it's true. You'd have to choose a less studied ROM as a test case, not Bubble Bobble, which the internet has discussed to death.

Stephen Foskett

sfoskett@techfieldday.net@richardfontana @evan @cwebber @bkuhn @ossguy I feel like it’s 3 questions for the court:

1 Can a non-human actor produce a copyrightable work? Likely no.

2 Is the human prompt and review enough to apply copyright to LLM content? Maybe?

3 Does this have implications for open source? I guess not.

Christine Lemmer-Webber

cwebber@social.coop@bkuhn @evan @richardfontana @ossguy Probably a ton of people here think I am anti-AI-output, and that I would be upset to find out that the chardet rewrite were legal.

Actually, I'm not! I'd be fine with the ability to copyright launder software to some degree, as long as we could do the same for proprietary software (including in binary form).

I'm concerned about whether or not we have an *equitable* situation, though. And I'm *more concerned* that we need to advise people, who are incorporating code *today*.

Evan Prodromou

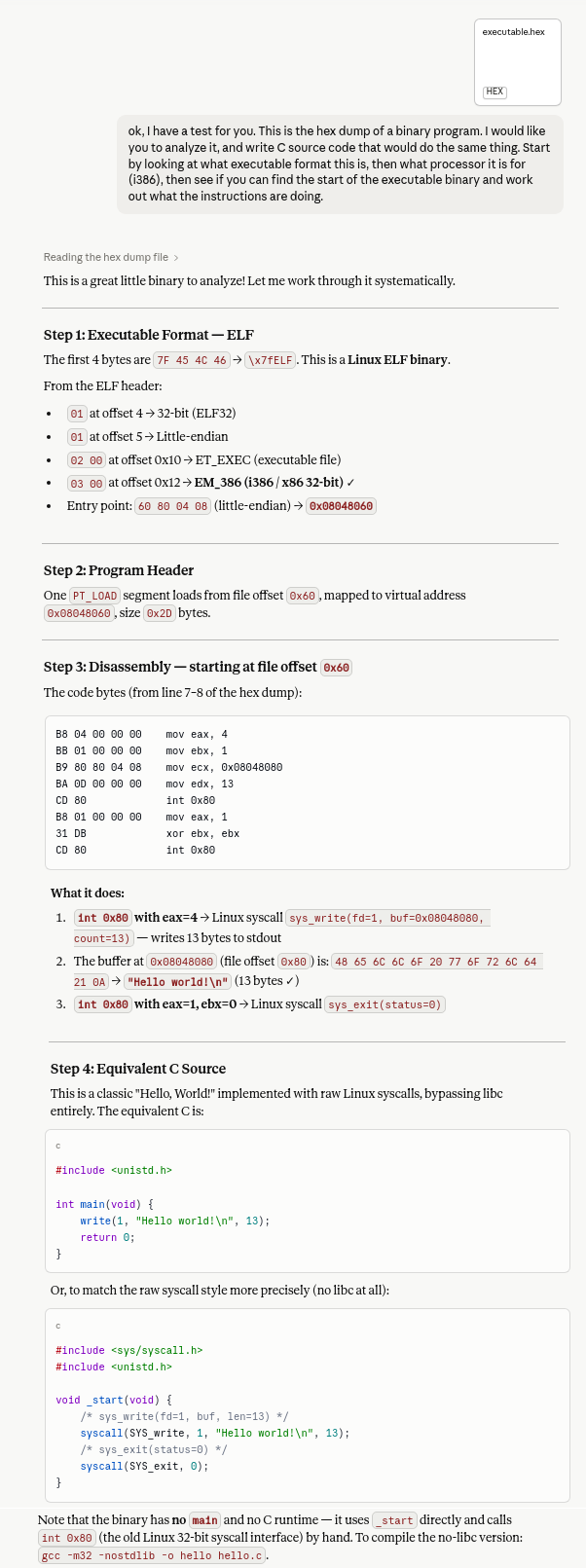

evan@cosocial.ca@bkuhn I just did an abstraction and filtration pass on a medium-sized application framework (~30K LOC), and as an expert on the code I think it did a good job:

https://claude.ai/share/071ccb69-5d22-4673-905a-362d9663e7d0

It missed a few things (e.g. relay specs). Then again, I have no idea how this kind of review is supposed to work. I didn't go down to the function or statement level -- that'd probably be much noisier.

Maybe chardet 2 and 7 would be a better test of the technique?

Richard J. Acton

RichardJActon@fosstodon.org@cwebber @bkuhn @ossguy @richardfontana I don't see a great way out of the copyright stripping conclusions for them without changes to the law. As I understand their defense for training on copyrighted materials, it's predicated on the models being "transformative" and not competing directly with the original works in the market. The models themselves don't compete with the training material, only their outputs do, and the LLM companies want any liability for that to be on users, not them.

Richard J. Acton

RichardJActon@fosstodon.org@cwebber @bkuhn @ossguy @richardfontana Under this view it doesn't matter how the training data was licensed, as it's a fair use defense. The outputs being uncopyrightable / effectively public domain allows people to claim they wrote it when it's convenient and they want to be able to copyright it, as it's hard to prove whether it was AI generated or human authored. And simultaneously to claim that it was the output of an LLM when they want to strip inconvenient licensing terms.

Evan Prodromou

evan@cosocial.caIf I were going to productize this, I'd do AF passes on a huge training dataset like The Stack and generate some kind of fingerprint for each program. (Estimated cost: billions!)

https://huggingface.co/datasets/bigcode/the-stack

Then, I'd have a tool to let you fingerprint your own code and C it against the big database -- maybe give you a list of high-similarity codebases.

And you could re-run the comparison each time you push to Git -- maybe only Cing what changed.
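
(Editorial sketch, not from the thread.) The fingerprint-and-compare idea above can be illustrated with a toy example. Everything here is an assumption made for illustration: the names `normalize`, `fingerprint`, `similarity`, and `most_similar` are hypothetical, and hashed token shingles with Jaccard overlap are a stand-in for whatever an abstraction and filtration pass would really produce. This measures textual similarity only; it is not the abstraction-filtration-comparison test and says nothing about infringement.

```python
import hashlib
import re

# Crude lexer: identifiers, integer literals, or any single non-space character.
TOKEN_RE = re.compile(r"[A-Za-z_]\w*|\d+|\S")
IDENT_RE = re.compile(r"[A-Za-z_]\w*")


def normalize(source: str) -> list[str]:
    """Tokenize and collapse identifiers/numbers so simple renames do not matter.

    Assumption: keywords are treated like any other identifier, which is
    deliberately naive.
    """
    tokens = []
    for tok in TOKEN_RE.findall(source):
        if IDENT_RE.fullmatch(tok):
            tokens.append("ID")
        elif tok.isdigit():
            tokens.append("NUM")
        else:
            tokens.append(tok)
    return tokens


def fingerprint(source: str, k: int = 5) -> frozenset[str]:
    """Return the set of hashed k-token windows (shingles) of the source."""
    tokens = normalize(source)
    if not tokens:
        return frozenset()
    if len(tokens) < k:
        windows = [tokens]  # too short for a full window: use what we have
    else:
        windows = [tokens[i:i + k] for i in range(len(tokens) - k + 1)]
    return frozenset(
        hashlib.sha256(" ".join(w).encode("utf-8")).hexdigest()[:16]
        for w in windows
    )


def similarity(a: frozenset[str], b: frozenset[str]) -> float:
    """Jaccard overlap of two fingerprints; 0.0 when both are empty."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def most_similar(
    query: str, corpus: dict[str, str], threshold: float = 0.5
) -> list[tuple[str, float]]:
    """List corpus entries whose similarity to the query meets the threshold."""
    q = fingerprint(query)
    scored = ((name, similarity(q, fingerprint(src))) for name, src in corpus.items())
    return sorted(
        ((name, score) for name, score in scored if score >= threshold),
        key=lambda pair: pair[1],
        reverse=True,
    )
```

With this sketch, two trivially renamed setters score as identical, which echoes the `Person.setName()` point earlier in the thread: a perfect match on boilerplate is weak evidence of copying, so any real tool would need a filtration step before comparison.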

Evan Prodromou

evan@cosocial.caI gave it a try. It's quite wordy! Claude thought that a lot of Pilgrim's work would be filtered since it was a direct port from the Mozilla C++ codebase. I pushed back that they shared the same license, and it loosened up that constraint.

https://claude.ai/share/e4aae73c-14d1-462e-9773-4381adde54f7

Warning: if you read this document, it will get AI in you, and it will make you AI and you will become an AI-booster like me and Sam Altman. It will also burn down the rainforest.

Evan Prodromou

evan@cosocial.caI think you could make the case that Claude is not an uninterested party in this discussion, since Blanchard used Claude to generate the code, so maybe it's lying to cover up its tracks.

Evan Prodromou

evan@cosocial.caI might ask ChatGPT to give it a try, and give it some extra incentive to dig deeper because if it digs up some dirt on Claude it'd be good for business.

Bradley M. Kühn

bkuhn@copyleft.org@evan 🤣 … but I know you're only half joking.

Frankly, part of the problem here is that people are either taking this situation *too* seriously or not seriously enough. I'm guessing you're right in the happy medium, but your comment made me think of that point.

Bradley M. Kühn

bkuhn@copyleft.org@evan